We already talked about several forms of criticality. One was the generation of the action potential in neurons. Another was the behavior of Wilson-Cowan neurons when the population transitions into a global limit cycle resembling epilepsy. Yet another was ferromagnetic behavior under heat, when we talked about the relationship between the Hopfield network and the Ising model. And finally we saw a highly dynamic image of the Belousov-Zhabotinsky reaction, where each point in space is an oscillator and every point is coupled to every other point. We talked about limit cycles and bifurcations, and we looked at some examples in populations of neurons.

Criticality occurs at the transition into chaos, and chaos is defined as "extreme sensitivity to initial conditions". Criticality is related to phase transitions, and here the word "phase" means phase of matter (like ice-water-steam), rather than the angular relationship between two sine waves. The word is problematic because of this ambiguity, and I'll try to be specific each time it's used. In neurons, "phase" carries both meanings: phase of matter, insofar as a population of neurons is oscillating or bursting rather than passively processing error signals, and dynamic phase, in that the phase of the oscillating population is important for coupling between oscillators (a concept that ties in directly with the dynamic assembly theory of cortical function).

In neural networks, the amplitude, frequency, and dynamic phase of an oscillating population can be controlled by neurons, as can the coupling between nearby oscillators. This activity can be controlled in real time by neuromodulators, and synaptically with or without plasticity. An example is the entrainment of local theta-type oscillators in the hippocampus by a spatially organized theta wave originating in the medial septal nucleus. Any time we have dynamics, it's a good bet there's something encoded in the dynamic. We've already talked about phase encoding and what it's good for. Now let's look at it in a slightly more advanced way, by introducing the concept of wavelets and wavelet transforms.

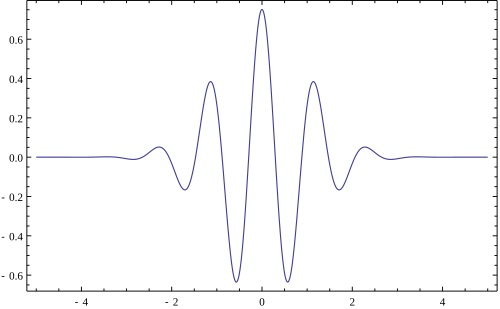

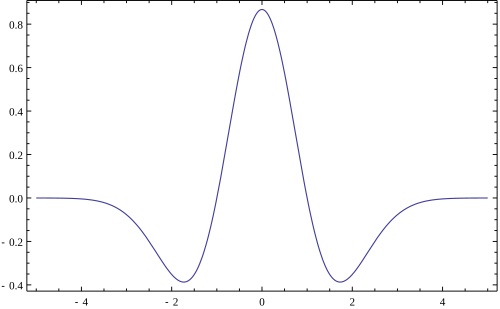

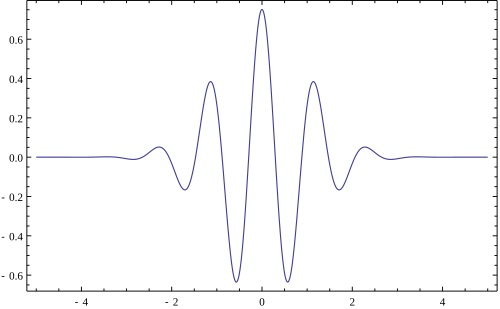

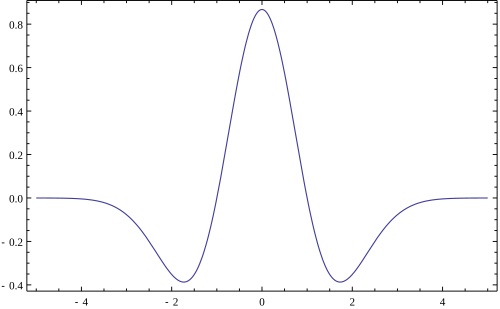

Wavelets and Signal Processing

In signal processing, one of the most common mathematical tools is the Fourier transform. Roughly speaking, the theory is that any signal can be represented by its periodic components, sines and cosines of various frequencies, with various amplitudes and phases. The Fourier transform is a powerful tool, but it loses the time origin. It assumes that the signal is infinite, going on forever in both directions, so in the conversion to the frequency domain the information about when each component occurs is no longer directly available. Wavelets solve this problem. A wavelet is shown in the figure. It's a small wave that's localized in both the time domain and the frequency domain. It provides both time and frequency information for a narrow window of the signal.

This is a wavelet, called a Morlet wavelet.

This is also a wavelet, one that more closely resembles the Mexican Hat function we talked about in relation to retinal receptive fields.
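To make "localized in time" concrete, here is a minimal sketch of the two wavelet shapes just mentioned. The function names, the center frequency `w0`, and the simplified (unnormalized) formulas are illustrative assumptions, not the exact curves in the figures.

```python
import numpy as np

def morlet(t, w0=5.0):
    """Real part of a simplified Morlet wavelet: a cosine under a Gaussian envelope."""
    return np.cos(w0 * t) * np.exp(-0.5 * t**2)

def mexican_hat(t):
    """Mexican hat (Ricker) wavelet: second derivative of a Gaussian, up to sign and scale."""
    return (1.0 - t**2) * np.exp(-0.5 * t**2)

t = np.linspace(-8.0, 8.0, 1601)
dt = t[1] - t[0]
m = morlet(t)
h = mexican_hat(t)

# Both shapes die away at the edges (localized in time) and have an area
# close to zero (they wiggle above and below the axis like a small wave).
edge_m, edge_h = abs(m[0]), abs(h[0])
area_m, area_h = np.sum(m) * dt, np.sum(h) * dt
print(edge_m, edge_h, area_m, area_h)
```

The Gaussian envelope is what buys the time localization; an ordinary sine or cosine has no envelope and so extends forever.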

A wavelet transform means we're going to use an orthonormal basis of wavelets to decompose and analyze our signal, rather than an orthonormal basis of infinite sines and cosines. In practice, a continuous spectrum is not needed. A very reasonable representation of the input signal can be achieved with just a few wavelet frequencies. Usually five or six orders are quite sufficient.

A wavelet can be handled like a convolution, that is to say, it can be slid over the signal like a filter - but in a neural network, wavelets can also be topographically hard-wired into a network where the local processing modules form oscillators. Wavelets can be synchronized from the outside. For example, if we wanted a wavelet to give us temporal information to complement the spatial frequency analysis in the primary visual cortex, we could have each mini-column (or a portion of each mini-column) oscillate like a wavelet, thereby analyzing each point in the visual space by treating it like a time series. The nature of neural wiring is such that nearby time series will associate with each other, forming distributed representations downstream that come to represent the statistics of the data (moving images).

Wavelets give us the most important time and frequency domain information for small areas in the visual field. And when the time course of neural events is slightly longer, as it is in the scene mapping areas around the hippocampus, wavelets can give us a local encoding and decoding of the spike trains related to theta waves.
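The "slide it over the signal like a filter" idea can be sketched with plain convolution. This is a minimal illustration, not a full wavelet transform: the complex Morlet kernel, the `n_cycles` parameter, the five chosen frequencies, and the synthetic theta-plus-gamma-burst signal are all assumptions made for the example.

```python
import numpy as np

def morlet_kernel(freq_hz, fs, n_cycles=5.0):
    """Complex Morlet kernel centered on freq_hz, sampled at fs (Hz)."""
    sigma_t = n_cycles / (2.0 * np.pi * freq_hz)        # envelope width in seconds
    t = np.arange(-4.0 * sigma_t, 4.0 * sigma_t, 1.0 / fs)
    kernel = np.exp(2j * np.pi * freq_hz * t) * np.exp(-0.5 * (t / sigma_t) ** 2)
    return kernel / np.sum(np.abs(kernel))              # normalize the gain

def wavelet_power(signal, fs, freqs_hz):
    """Slide each kernel over the signal; rows are frequencies, columns are time."""
    return np.array([
        np.abs(np.convolve(signal, morlet_kernel(f, fs), mode="same")) ** 2
        for f in freqs_hz
    ])

fs = 1000.0
t = np.arange(0.0, 2.0, 1.0 / fs)
signal = np.sin(2 * np.pi * 6.0 * t)                     # ongoing 6 Hz "theta"
burst = (t > 1.2) & (t < 1.4)
signal[burst] += np.sin(2 * np.pi * 40.0 * t[burst])     # brief 40 Hz "gamma" burst

freqs = np.array([6.0, 12.0, 20.0, 40.0, 80.0])          # just five wavelet frequencies
power = wavelet_power(signal, fs, freqs)

gamma_row = power[3]                                     # the 40 Hz row
peak_time = t[np.argmax(gamma_row)]
print(f"40 Hz power peaks at t = {peak_time:.2f} s")
```

A Fourier transform of the same signal would report that 40 Hz is present, but not when. The 40 Hz row of `power` is large only around the burst, which is the time information the wavelet preserves.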

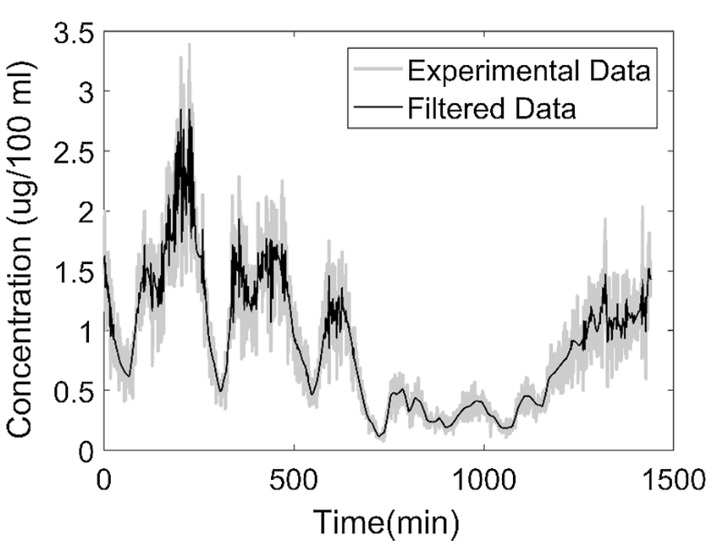

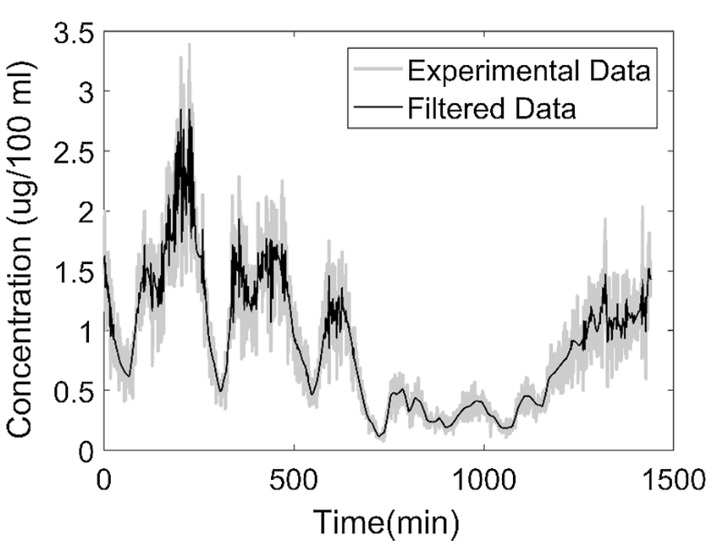

An elementary application of wavelets is in de-noising. The figure shows a time series de-noised by a wavelet transform.

(figure from Pillai et al 2018)
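For readers who want to try de-noising themselves, here is a minimal sketch using the simplest orthonormal wavelet (the Haar wavelet) with soft thresholding. It is not the method used in the cited figure; the Haar basis, the five decomposition levels, the universal threshold, and the synthetic test signal are assumptions chosen to keep the example short.

```python
import numpy as np

def haar_forward(x, levels):
    """Multi-level orthonormal Haar transform: (approximation, details fine to coarse)."""
    approx = np.asarray(x, dtype=float)
    details = []
    for _ in range(levels):
        even, odd = approx[0::2], approx[1::2]
        details.append((even - odd) / np.sqrt(2.0))
        approx = (even + odd) / np.sqrt(2.0)
    return approx, details

def haar_inverse(approx, details):
    """Undo haar_forward, rebuilding from the coarsest level back to the finest."""
    for d in reversed(details):
        out = np.empty(2 * approx.size)
        out[0::2] = (approx + d) / np.sqrt(2.0)
        out[1::2] = (approx - d) / np.sqrt(2.0)
        approx = out
    return approx

def soft_threshold(c, thr):
    """Shrink coefficients toward zero; anything smaller than thr becomes zero."""
    return np.sign(c) * np.maximum(np.abs(c) - thr, 0.0)

def denoise(x, levels=5):
    """len(x) must be divisible by 2**levels."""
    approx, details = haar_forward(x, levels)
    sigma = np.median(np.abs(details[0])) / 0.6745   # noise estimate from finest details
    thr = sigma * np.sqrt(2.0 * np.log(len(x)))      # "universal" threshold
    return haar_inverse(approx, [soft_threshold(d, thr) for d in details])

rng = np.random.default_rng(0)
n = 1024
t = np.arange(n) / n
clean = np.sin(2 * np.pi * 2 * t)
noisy = clean + 0.3 * rng.standard_normal(n)
cleaned = denoise(noisy)

mse_noisy = np.mean((noisy - clean) ** 2)
mse_cleaned = np.mean((cleaned - clean) ** 2)
print(mse_noisy, mse_cleaned)
```

The idea is that noise spreads evenly over all wavelet coefficients while the signal concentrates in a few large ones, so discarding the small coefficients removes mostly noise.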

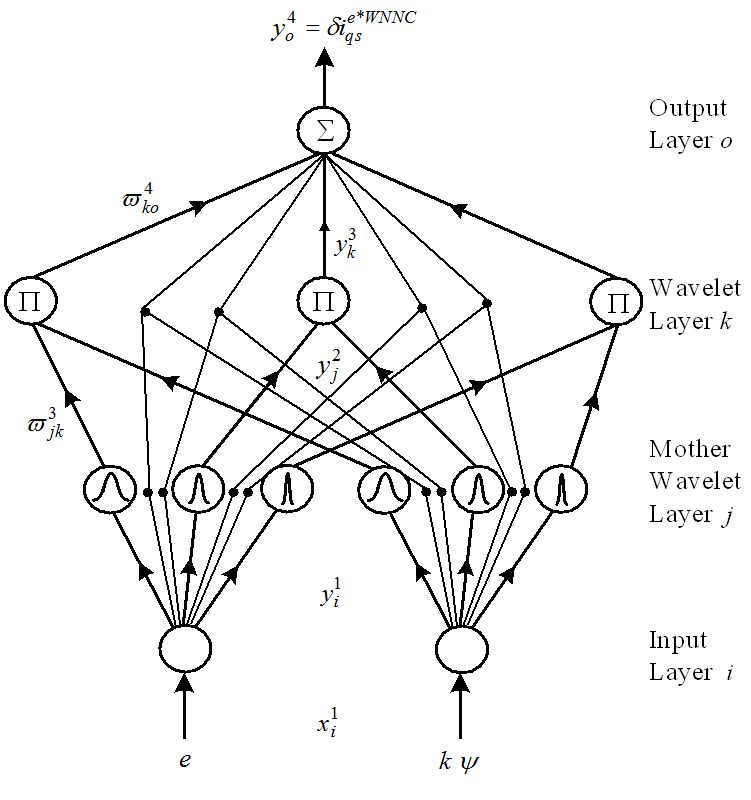

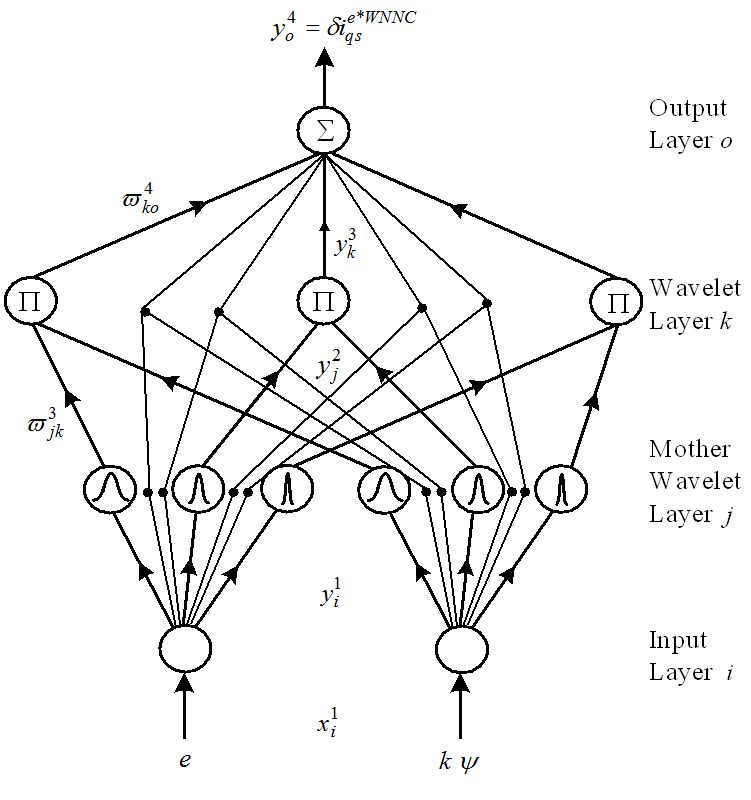

Wavelet transforms are amenable to the natural structure of neural networks. This figure shows a wavelet transform implemented by a neural network. In a wavelet transformation there is usually a cascade of wavelets derived from a "mother wavelet" with the lowest frequency, and that's what this network is implementing.
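In standard notation (the symbols here are the conventional ones, not taken from the figure), each wavelet in the cascade is a scaled and shifted copy of the mother wavelet $\psi$, and the transform is the set of inner products of the signal $x(t)$ with those copies:

$$
\psi_{a,b}(t) = \frac{1}{\sqrt{a}}\,\psi\!\left(\frac{t-b}{a}\right),
\qquad
W(a,b) = \int x(t)\,\psi^{*}_{a,b}(t)\,dt
$$

Here $a$ is the scale (larger $a$ means a more stretched, lower-frequency wavelet) and $b$ is the position in time. A discrete cascade typically uses the dyadic choice $a = 2^{j}$, $b = k\,2^{j}$, so each level of the cascade corresponds to one value of $j$.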

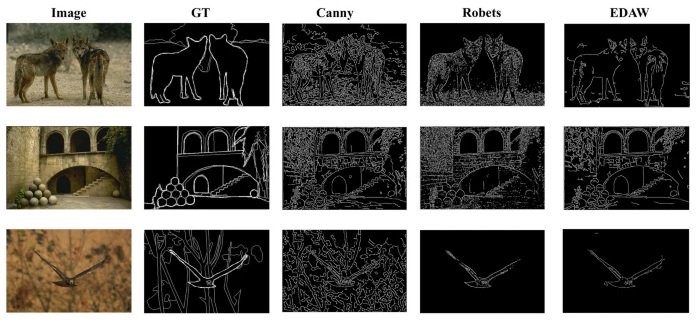

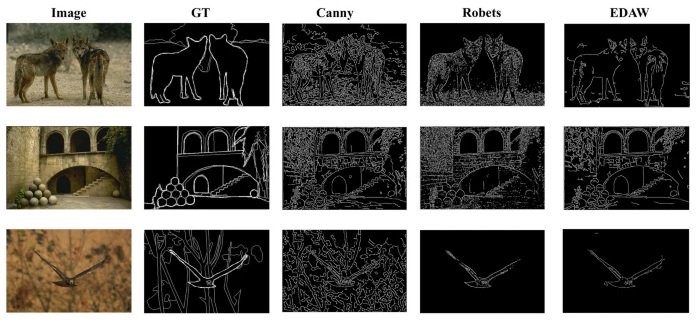

This figure shows the power and accuracy of wavelets. In this experiment, noise has been deliberately injected into some images, and various edge detection methods compared to see which performs better in real life (noisy) conditions. The wavelet version is on the right. Note how the other methods leave plenty of noise, whereas the wavelet method is accurate and delivers a form of automatic gain control for details.

(figure from Li & Xu 2025)

Wavelets and Neural Networks

The central concept of a wavelet filter is that it travels along a portion of the time series. In a neural network, the time series is a spike train, or some form of population activity. A self-sustaining oscillator in a neural network, by its very nature, generates wave-like activity, and in a topographic network these waves will likely become traveling waves because of the connectivity (which usually involves recurrent positive feedback - this is certainly the case in the hippocampus and the cerebral cortex). The lateral connectivity is one of the reasons for entrainment and one of the reasons for the coupling of oscillators, and thus contributes to the long range interactions described in the subsequent section on thermodynamics.

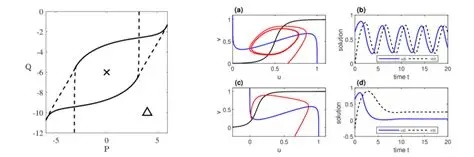

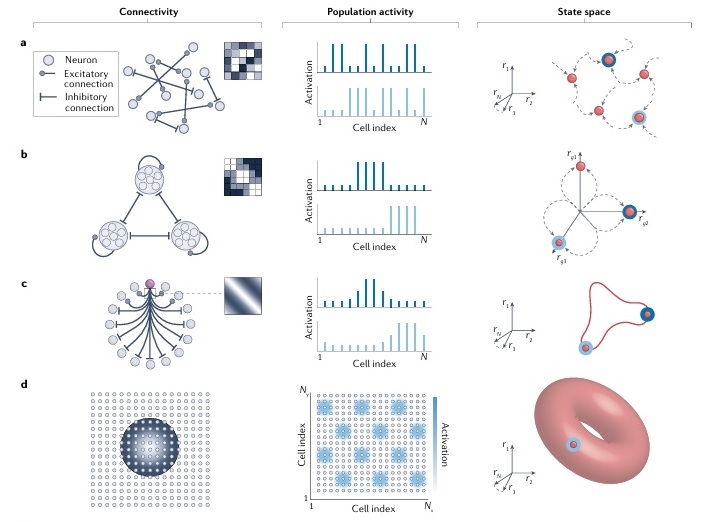

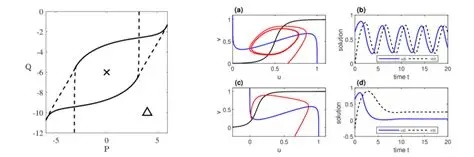

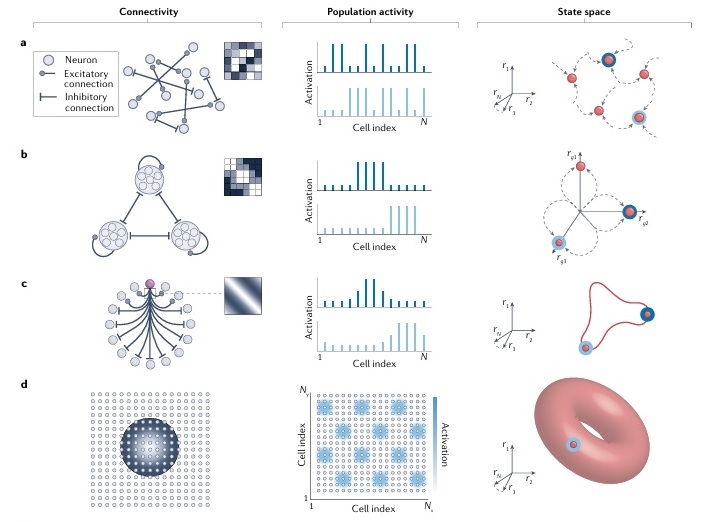

In the hippocampus, there is a broad range of electrical activity at frequencies beginning in the low delta range (about 2 Hz) and extending into the upper gamma range (about 100 Hz). Activity in the theta range, around 5 to 7 Hz, couples with gamma in the prefrontal cortex, and gamma in the prefrontal cortex is coupled with gamma in other areas of the cortex. In this case "coupling" means coherence: there is a stable phase relationship between the signals. Neural oscillators can be synchronized in many ways, and we looked at some of them in the section on neurons. Some of this synchronization is very specific. For example, subthreshold oscillations in the high gamma range can be reset by synaptic inhibition, and if the inhibition occurs at a theta frequency, this provides a mechanism for phase-related coupling.

There are other ways coupling can occur. In the section on the visual system we talked about basic connection motifs. Here are some more of them. Each of the simple motifs in the figure is capable of generating limit cycle behavior in the phase plane - that is to say, oscillations. These dynamics tie in directly with the discussion of information geometry later in this section, as shown neatly in part (d) of the figure. Part (c) is a ring attractor, which is a ubiquitous arrangement in biological networks.

(figure from Khona and Fiete 2022)
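Coupling in the sense of coherence, a stable phase relationship between two signals, can be put into numbers. One common measure is the phase-locking value; using it here is an assumption about what best matches the text, and the function names and the synthetic 40 Hz and 47 Hz signals are illustrative.

```python
import numpy as np

def analytic_signal(x):
    """Analytic signal via the FFT: keep positive frequencies, drop negative ones."""
    n = x.size
    spectrum = np.fft.fft(x)
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * weights)

def phase_locking_value(x, y):
    """Near 1 for a stable phase relationship, near 0 for a drifting one."""
    dphi = np.angle(analytic_signal(x)) - np.angle(analytic_signal(y))
    return np.abs(np.mean(np.exp(1j * dphi)))

fs = 1000.0
t = np.arange(0.0, 2.0, 1.0 / fs)
gamma_a = np.sin(2 * np.pi * 40.0 * t)
gamma_b = np.sin(2 * np.pi * 40.0 * t - 0.8)    # same frequency, fixed phase lag
gamma_c = np.sin(2 * np.pi * 47.0 * t)          # different frequency, phase drifts

plv_locked = phase_locking_value(gamma_a, gamma_b)
plv_drifting = phase_locking_value(gamma_a, gamma_c)
print(plv_locked, plv_drifting)
```

Note that the locked pair is not in phase; it only keeps the same lag throughout, which is all that coherence requires.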

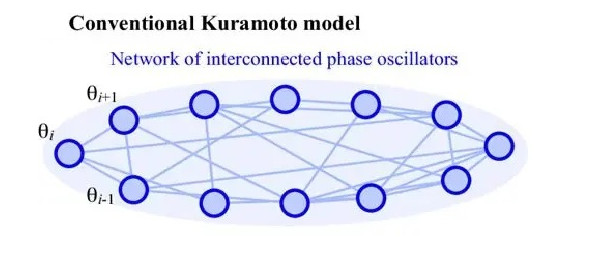

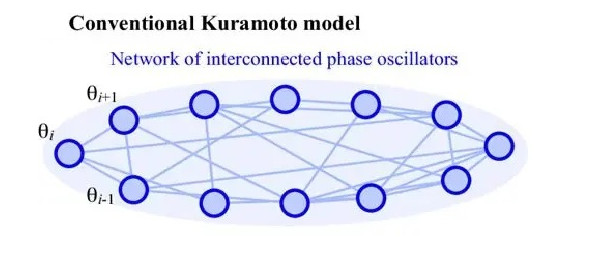

A simple example of coupled oscillators is a pair of pendulums. Oscillations that begin as independent will tend to synchronize their phases on the basis of small bits of energy that transfer back and forth. The same is true in neural networks. A general model for coupled oscillators with similar natural frequencies was developed by Yoshiki Kuramoto in 1975. In its commonly used form it reads

$$
\frac{dP_i}{dt} = X_i + \sum_{j} K_{ij}\,\sin(P_j - P_i)
$$

where $P_i$ is the phase of the i-th oscillator, $X_i$ is its natural frequency, and the $K_{ij}$ are "coupling constants" between the oscillators. With this sign convention, positive coupling pulls the phases toward each other.

This description, while loose, is adequate to illustrate many of the fascinating dynamic behaviors that can emerge from these networks. The possibilities within the Kuramoto phase plane are so rich they're still being studied some 50 years later. Chaotic behavior is easy to accomplish, and there are behaviors reminiscent of some of the notable achievements in nonlinear thermodynamics, like the spatial patterns in the Belousov-Zhabotinsky reaction.
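A small simulation shows the basic effect. This is a minimal sketch under stated assumptions: every pair of oscillators shares the same coupling constant (K divided by the number of oscillators), the sign convention is the usual one in which positive coupling pulls phases together, integration is plain Euler, and the natural frequencies are drawn from a Gaussian. The "order parameter" is the standard measure of synchrony, from 0 (phases scattered) to 1 (phases aligned).

```python
import numpy as np

def simulate_kuramoto(natural_freqs, coupling, phases0, dt=0.01, steps=3000):
    """Euler integration of dP_i/dt = X_i + (K/N) * sum_j sin(P_j - P_i)."""
    phases = np.array(phases0, dtype=float)
    n = phases.size
    for _ in range(steps):
        diffs = phases[None, :] - phases[:, None]        # diffs[i, j] = P_j - P_i
        phases += dt * (natural_freqs + (coupling / n) * np.sin(diffs).sum(axis=1))
    return phases

def order_parameter(phases):
    """Length of the average unit phase vector: 0 = incoherent, 1 = fully synchronized."""
    return np.abs(np.mean(np.exp(1j * phases)))

rng = np.random.default_rng(0)
n = 50
freqs = rng.normal(0.0, 0.5, n)                  # slightly different natural frequencies
phases0 = rng.uniform(0.0, 2.0 * np.pi, n)       # random starting phases

r_weak = order_parameter(simulate_kuramoto(freqs, 0.1, phases0))
r_strong = order_parameter(simulate_kuramoto(freqs, 4.0, phases0))
print(r_weak, r_strong)
```

With weak coupling each oscillator runs at its own frequency and the phases stay scattered. With strong coupling the population locks together, even though no single oscillator is in charge.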

All manner of interesting dynamics can be seen in these phase plane visualizations. Bifurcations are evident, along with chaotic regions and regions where the system response is nearly linear. The Kuramoto paradigm is capable of generating just about any trajectory imaginable. The real question becomes how to control the network to obtain the desired dynamics. Fortunately, coupled oscillators map directly into information geometry, and therefore back down again into dynamics and synaptically controllable network plasticity.

If we momentarily set aside the issue of the mechanics around the dynamic, we can ask ourselves what we can actually do with it. One of the most important uses of wavelet transforms is extracting (decoding) the spike train from a modulated signal. In a neural network, specific architecture is required to process fast signals. Most of the time neural networks are inherently slow: the neurons operate in the millisecond range and the synapses aren't any faster. To get delay lines of the form discussed in an earlier section, specific architecture is needed. One of the things phase encoding allows us to do is individuate temporal information with a minimum of specific architecture. We can't devote a delay line to every piece of visual information, there isn't enough room in the brain! Instead, what we do is encode the information, compressing it in such a way that it can be efficiently represented and efficiently stored in memory. That's what phase encoding and wavelet transforms are for.

Now... there is an entirely different way of looking at wavelets, that only involves the statistics of the underlying signal. It turns out that the wavelet doesn't have to be regular - it could in fact be white noise with a particular envelope. In this case the wavelet becomes a "test function" for white noise analysis. This is where the concept of "wavelet" begins to introduce the issue of information representation, which leads eventually to information geometry. Testing a signal (or a system) with noise is essentially Volterra (Wiener) kernel analysis: the kernels expose "memory" in the system, times that influence future times, and delays associated with signal processing. Since these become parameters in parameter space, the issue once again becomes the representation of the information.

An excellent example is provided by the Bloch sphere in quantum computing. This is a "representation" of a parameterized probability space. We can conveniently visualize it because the laws of probability dictate that everything has to add up to 1. Everything has to be 1, so for a pure state we have a radius of 1 in every direction and a vector of length 1. Easy stuff. More importantly though, the representation is useful mathematically. It describes the state of any two-level system, such as the polarization of a photon, as a length and two angles. That's pretty good information compression. It's taking an entire spectrum of sines and cosines and probability amplitudes and mapping the whole thing down to a length and two angles.

The same thing happens in phase encoding, and wavelet transforms are the way we read it out. In a real-time neural network, the vectors on the Bloch sphere are constantly moving. The wavelet transforms are what convert the movements (the changes in the length and two angles) back into something we can easily visualize and use - a vector. Neural networks love vectors! They're easy to handle, good for encoding and good for GPUs. In the higher dimensional case we use tensors... same thing, just more commas.
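The "length and two angles" can be computed directly. The sketch below uses the standard textbook mapping from a pair of complex probability amplitudes to a point on the Bloch sphere; the function names and the example states are illustrative, and nothing here is specific to neural networks.

```python
import numpy as np

def bloch_vector(alpha, beta):
    """Map amplitudes (alpha, beta) of a two-level state to Cartesian Bloch coordinates."""
    a, b = complex(alpha), complex(beta)
    norm = np.sqrt(abs(a) ** 2 + abs(b) ** 2)    # enforce "everything adds up to 1"
    a, b = a / norm, b / norm
    cross = np.conj(a) * b
    return np.array([2.0 * cross.real, 2.0 * cross.imag, abs(a) ** 2 - abs(b) ** 2])

def length_and_angles(v):
    """Return (r, theta, phi): length, polar angle from +z, azimuth in the x-y plane."""
    r = np.linalg.norm(v)
    theta = np.arccos(np.clip(v[2] / r, -1.0, 1.0))
    phi = np.arctan2(v[1], v[0])
    return r, theta, phi

up = bloch_vector(1.0, 0.0)                       # "north pole"
plus = bloch_vector(1.0, 1.0)                     # equal mix, zero relative phase
plus_i = bloch_vector(1.0, 1.0j)                  # equal mix, quarter-turn relative phase

for name, v in [("up", up), ("plus", plus), ("plus_i", plus_i)]:
    r, theta, phi = length_and_angles(v)
    print(name, np.round(v, 3), round(r, 3), round(theta, 3), round(phi, 3))
```

The two complex amplitudes carry four real numbers, but normalization and an irrelevant overall phase remove two of them, which is why two angles are enough for a pure state.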

Wavelets and Criticality

We talked about criticality, but we never really mentioned what it's good for, in terms of any additional computational capability it might bring to a neural network. Examples abound of the benefits of criticality for certain kinds of computations. In our case, we're probably specifically interested in things like Bayesian optimization, which is an essential task performed by neural networks that keeps the belief system aligned with experiential evidence. In certain cases, computations carried out in a chaotic context significantly out-perform traditional methods like least-squares, and achieve much greater accuracy and precision. An excellent example is provided by Pillai et al 2018 in a pharmaceutical context related to molecular kinetics. In this case the chaotic computations were applied on a grid, in much the same way they might be in a neural network.

The coupling of oscillators is another important aspect of chaotic behavior. Assemblies (circuits) of neurons are in general multi-stable, and sometimes it's useful to think in terms of the amount and degree of chaos. A transition to an oscillatory or bursting state can be immediately detected by wavelet filters downstream. This type of filtering is especially useful for managing "hot spots" in a visual representation. If hot spots drive an assembly into criticality, then we expect that nearby neurons will tend to be dynamically recruited more easily than neurons that are far away, just on the basis of neural connectivity. Thus we expect to see "regions" of chaos in the network, and where those regions are will form a map of the hot spots. This map can then be fed to an attention network, which can efficiently relate the information in the hot spots without having to relate irrelevant input in the rest of the network.

Let's delve into the issue of representation. We already looked at several versions of it: the pattern of activity in a topographic network, the weights in a synaptic matrix, the position of a signal along a delay line, and the spike train associated with a phase encoded place map. These things need to be made consistent. The brain is broadly the same all over, in the sense that the cerebral cortex has the same architecture in front and in back. There are some major systems though, where the encoding probably changes, because the architecture is so different that it can't work the same way. An example is the organization of medium spiny neurons in the striatum. They get input from just about every area of cortex, and they're responsible for selecting and elaborating motor behaviors. This suggests they should be closely related to the "graphs" we talked about earlier.

Next: Representation
