Psychologists often categorize memory into iconic memory (high-capacity and very brief, raw and not yet processed), working memory (pre-episodic but already processed in many ways, lasting perhaps a fraction of a minute to a few minutes), episodic memory (short term memory, lasting anywhere from half an hour to a couple of days), and long-term memory (permanent, after consolidation). When talking about memory, we must be specific. The word "memory" has a specific meaning, and it does not equate with plasticity. In many cases the two are intimately linked, but they're not the same. Technically, memory is a dependence on prior times, so instead of f(t) we have f(t, t-1, t-2, ..., t-n). Plasticity, by contrast, is the algorithm that changes the parameters inside f over time, depending on the input.
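To make the distinction concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a model of any brain circuit: `respond` plays the role of f(t, t-1, ..., t-n) as a weighted sum over the last three inputs, and `adapt` is a simple least-mean-squares rule standing in for plasticity. The function names, the three-tap window, the `target` weights and the learning rate are all hypothetical choices made for the example.

```python
import random

def respond(history, weights):
    """Memory: the output depends on the current input and the previous ones."""
    return sum(w * x for w, x in zip(weights, history))

def adapt(weights, history, error, rate=0.05):
    """Plasticity: a rule that changes the parameters inside f (an LMS update)."""
    return [w + rate * error * x for w, x in zip(weights, history)]

random.seed(0)
target = [0.5, 0.3, 0.2]     # hypothetical "true" dependence on t, t-1, t-2
weights = [0.0, 0.0, 0.0]    # parameters of f, initially uninformed
history = [0.0, 0.0, 0.0]    # most recent input first

for _ in range(5000):
    # Shift the newest input into the window (this window is the memory).
    history = [random.uniform(-1, 1)] + history[:-1]
    error = respond(history, target) - respond(history, weights)
    # Adjust the parameters (this update is the plasticity).
    weights = adapt(weights, history, error)

print([round(w, 3) for w in weights])
```

The `history` window exists whether or not the weights ever change, and the weights can change without lengthening the window. That is the sense in which memory and plasticity are linked but not the same.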

In humans, memory is a layered process with different stages involving different time courses. Iconic memory is almost instantaneous, and disappears almost as quickly. In the visual system it is most closely associated with the persistence and disappearance of visually evoked activity in the primary visual cortex (V1). Iconic memory is functionally related to the occurrence of afterimages, a phenomenon also called "visual (or visible) persistence". Iconic visual memory was first documented and studied by the pioneering psychologist George Sperling in the early 60's. In the 80's, researchers discovered that visual persistence and informational persistence could be experimentally dissociated (Di Lollo 1980).

Short term (working and episodic) visual memory is organized by the same brain areas that handle scene reconstruction. Through attentional mechanisms, specific portions of the visual information are selected from the iconic representation and transferred into the scene map. There, additional information related to context is introduced, but that information is kept separate and does not become part of the scene. Multiple scenes can become an "episode". At the end of an episode, it appears that consciousness is flushed and working memory is transferred into non-conscious episodic memory. Consolidation into long term memory takes hours, even days. The process of memory consolidation is not well understood. One of the requirements is that only essential information gets stored. Unlike machines, however, humans can require only one presentation to retain an image indefinitely. Information does fade and decay, but the time courses of the decay are set up so they're "longer than" what is generally needed in real world biological settings. (The same is true for the integrator in the oculomotor system, which only has to deal with delays on the order of a few milliseconds but has a time constant in the 20+ second range.)
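The oculomotor example can be checked with a line of arithmetic. The sketch below assumes the integrator behaves like a simple leaky integrator with exponential decay, which is a modeling assumption and not something established above. The function name `retained_fraction` and the specific 5 ms delay are illustrative.

```python
import math

def retained_fraction(delay_s, tau_s):
    """Fraction of a stored value left after delay_s, for exponential decay
    with time constant tau_s (leaky-integrator assumption)."""
    return math.exp(-delay_s / tau_s)

# A 5 ms delay against a 20 s time constant: almost nothing is lost.
print(retained_fraction(0.005, 20.0))

# For comparison, a delay equal to the time constant leaves about 37%.
print(retained_fraction(20.0, 20.0))
```

With these numbers the loss over the relevant delay is a few hundredths of a percent, which is the sense in which the decay is "longer than" needed.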

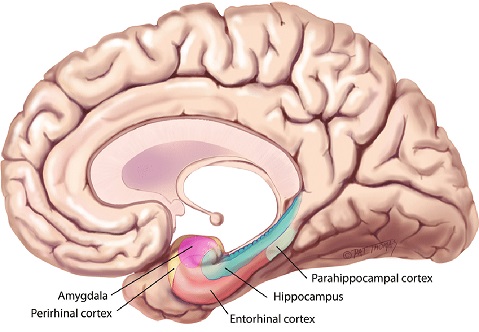

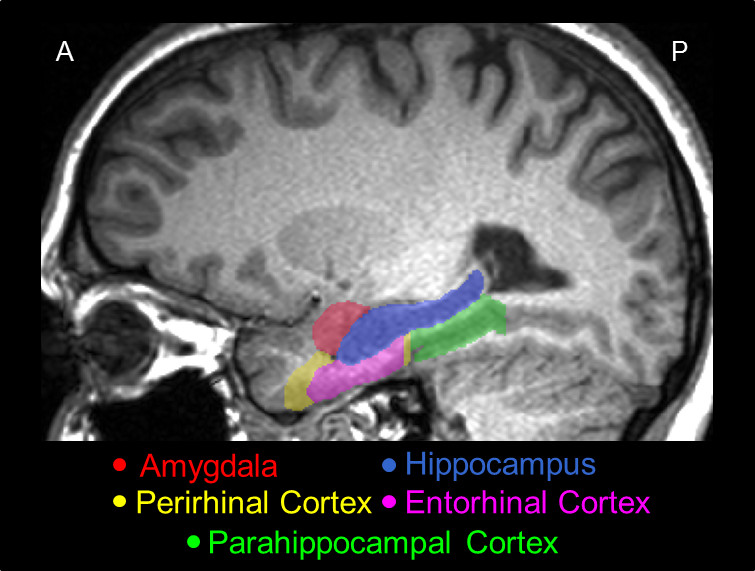

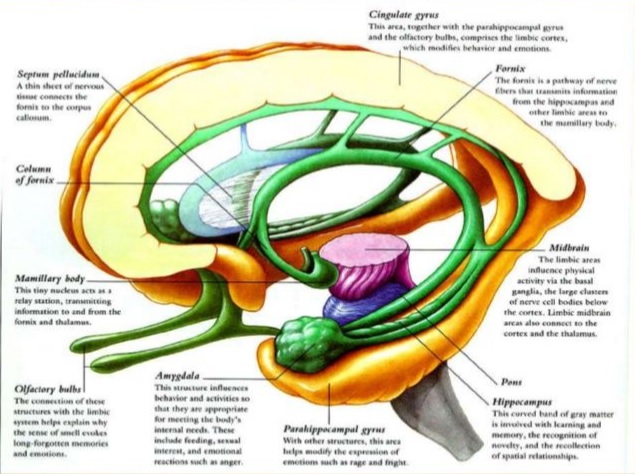

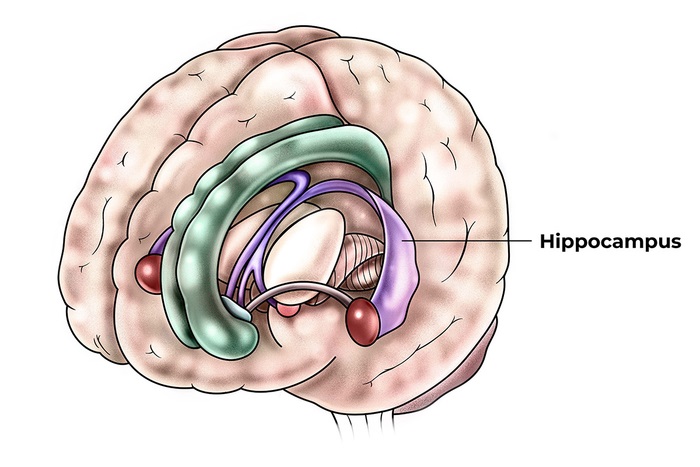

In the process of scene and memory organization, visual content is guided towards the hippocampus by pathways from both visual streams into the entorhinal cortex and surrounding areas (parahippocampal cortex, perirhinal cortex, etc). There, scene information is "phase encoded" relative to a theta rhythm organized in the medial septal nucleus. It is still unclear whether the septal rhythm is a dependency or merely a driver, since many brain areas can generate their own rhythms locally. However, the net effect is to synchronize activity in the population of hippocampal neurons. Much like the visual cortex, scene reconstruction seems to sample the input space. The hippocampus negotiates with the prefrontal cortex for contextual information related to the scene. This information includes both the relationships between objects and the value of an object. After an episode consisting of multiple scenes, the memory is somehow consolidated into a long term representation accessible through the global store. No one yet knows how this occurs. At least a part of it can occur during sleep, which is a complex subject beyond the scope of these pages. The important part for us is that information from multiple scenes is phase-encoded into spike patterns that represent an episode. The aggregate activity in such spike patterns is a hot topic for research. Let's see if we can get a better feel for what happens in the scene mapping and episodic memory circuitry around the hippocampus.

Circuitry Around The Hippocampus

One of the famous cases related to memory and the hippocampus is patient HM (1957), who lost the ability to consolidate episodes into long-term memory after bilateral removal of the hippocampus for intractable epilepsy. He was fine until consolidation: he could function perfectly well through the episode itself, but after a few minutes he had no recollection whatsoever that the episode had ever occurred, and he completely forgot anything new he learned during the episode (Scoville and Milner 1957). HM was studied by Brenda Milner, who at the time was working under Donald Hebb, around the same time that Wilder Penfield was performing his famous experiments with brain stimulation at McGill University in Montreal (Squire 2010).

Gradually over the years it became apparent that visual memory is not just a passive recording system; it involves active reconstruction. This information originally came out of psychology experiments performed in the 30's by Frederic Bartlett (1932) and Carmichael et al (1932). This view was later reinforced in an interesting way by Loftus' work with eyewitness testimony (1975). Visual working and episodic memory is resource-constrained: it is limited in capacity. Only the most important information, as determined by attention, can be stored with precision (Bays et al 2024).

The visual streams come together at the level of the hippocampus. The circuitry around the hippocampus performs two essential functions: scene reconstruction (which helps us navigate), and short term memory (which helps provide the context related to the scene). The memory process in the hippocampus is often referred to as "episodic" because it pertains to a series of events that are related in time.

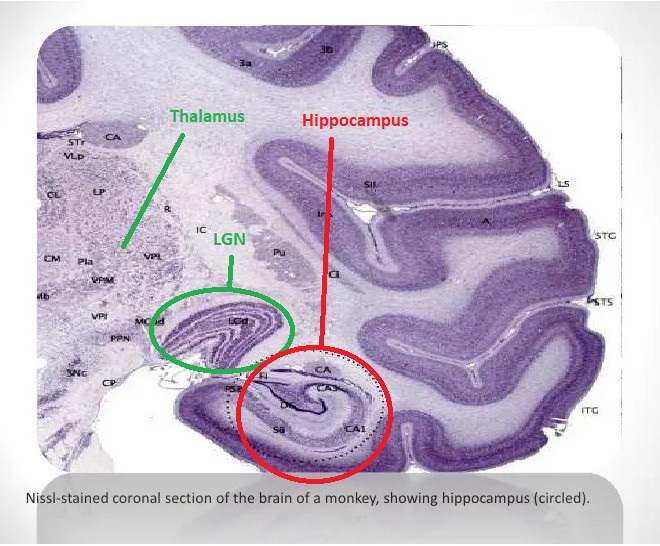

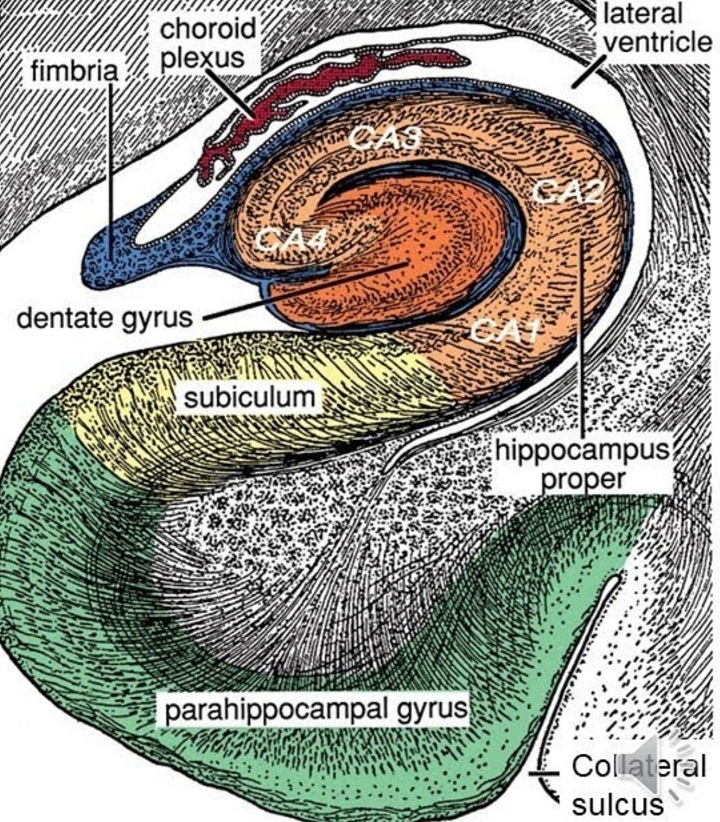

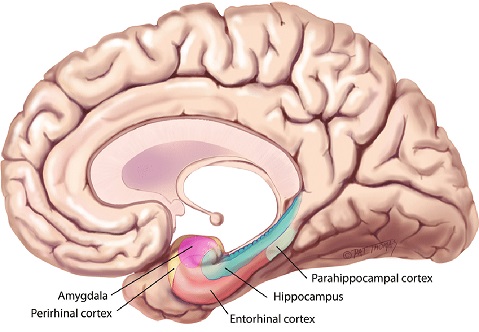

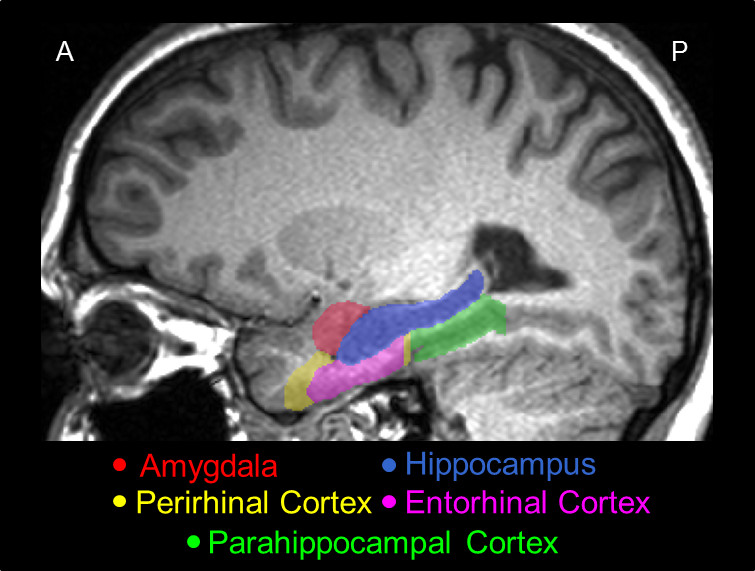

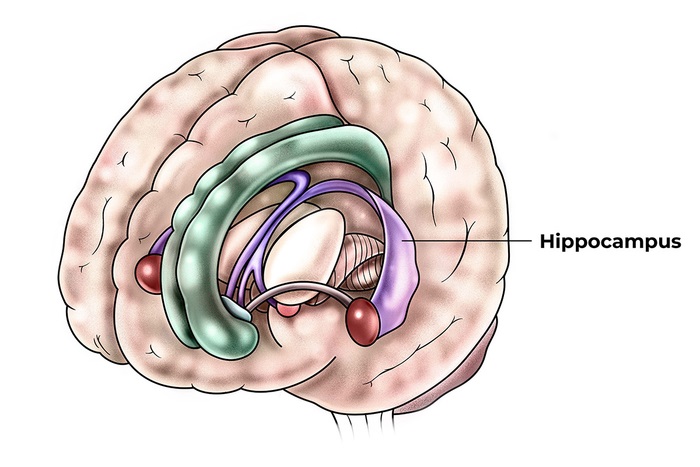

The hippocampus is much too complicated a topic to cover with any justice on these pages. There is a plethora of information about it online. The figure shows the major anatomical landmarks associated with the hippocampus. This is a medial view of the brain, showing the inside of the temporal lobe. Of particular interest is the entorhinal cortex, which receives input from both visual streams.

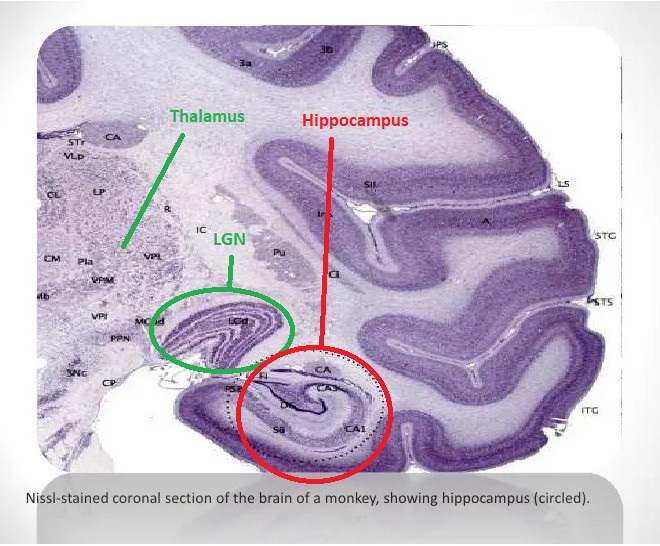

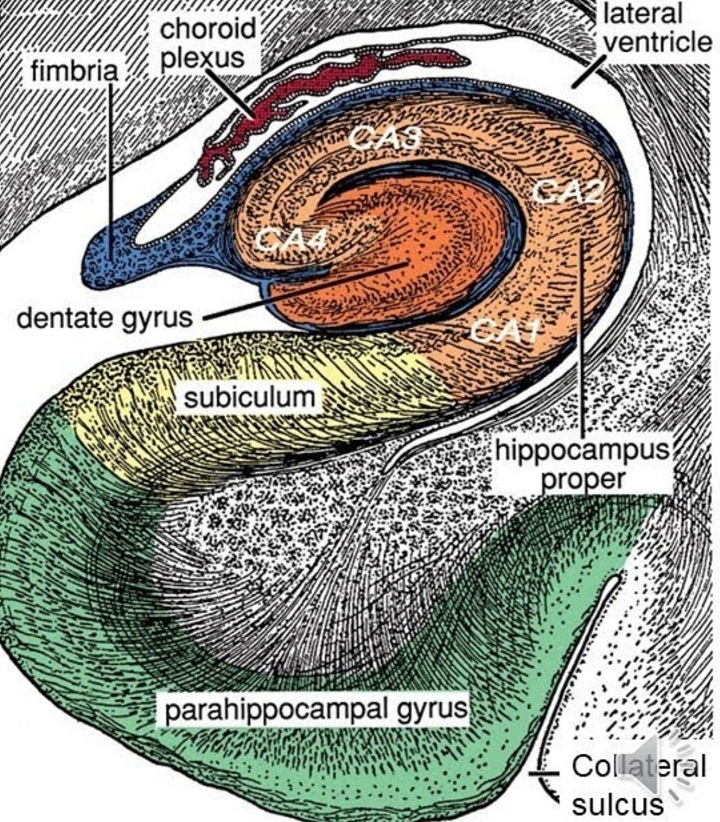

The hippocampus and surrounding areas have a complex architecture. These figures show some of the highlights.

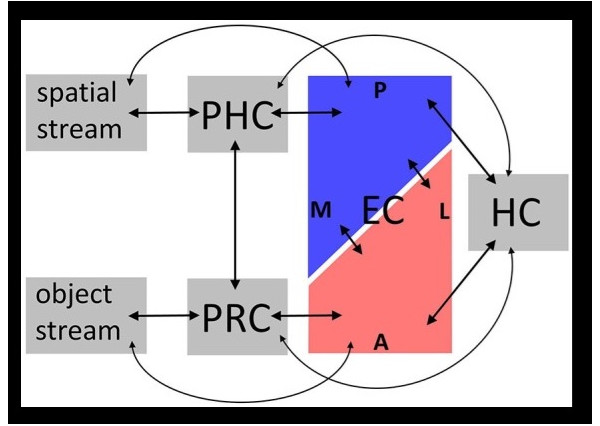

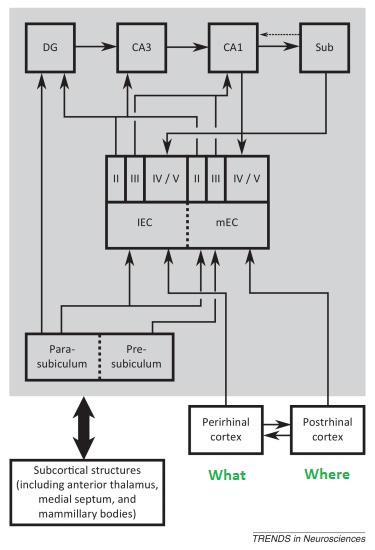

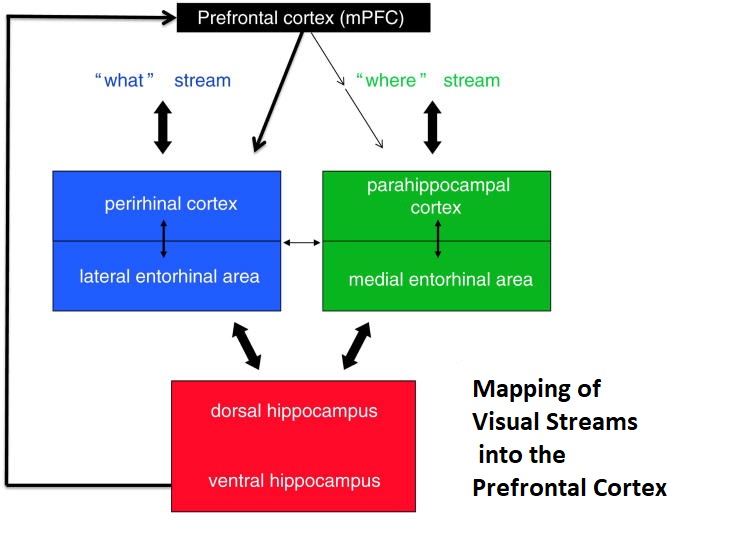

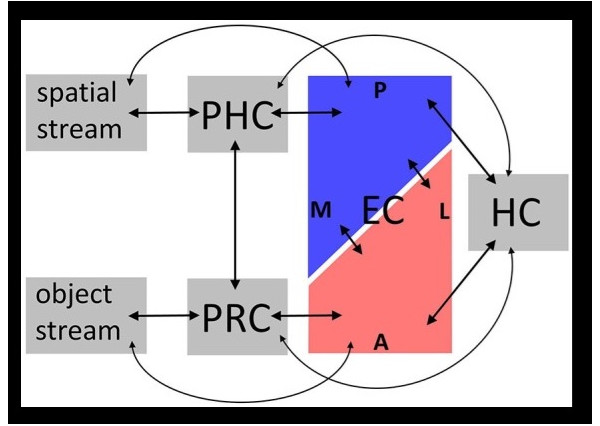

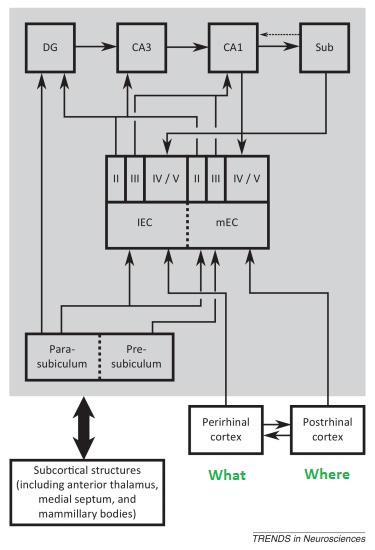

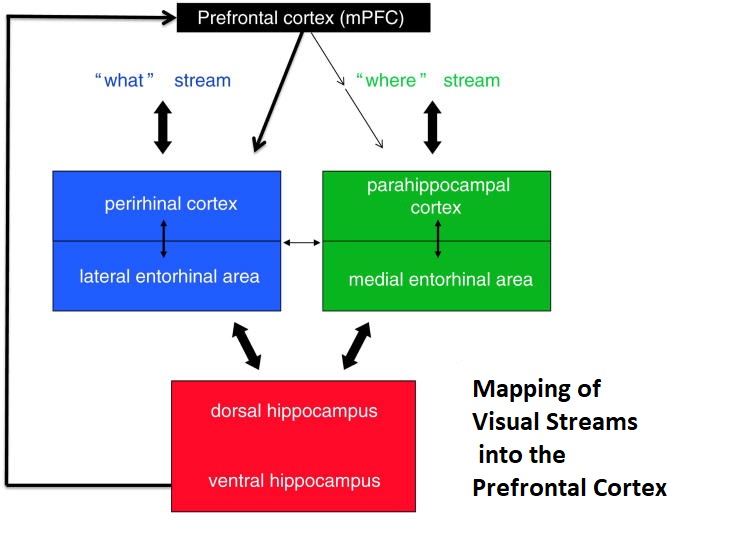

There is overwhelming evidence that the hippocampus and surrounding areas engage in scene reconstruction. To do this, information from the dorsal and ventral visual streams must be combined: we need to know both where an object is and what it is. Human brain anatomy supports this convergence. The ventral visual stream enters the perirhinal cortex and terminates preferentially in the lateral entorhinal cortex, whereas the dorsal stream enters the parahippocampal cortex and terminates preferentially in the medial entorhinal cortex (where the grid cells are, in layer 2). Both pathways feed the hippocampus in multiple ways, both directly and indirectly via the subiculum.

In turn, the hippocampus communicates with the prefrontal cortex, from which it derives contextual information related to the scene, and to which it sends encoded episodic information. The connection from the hippocampus to the medial prefrontal cortex is direct, monosynaptic, and unidirectional (Croxson et al 2005).

In real life the situation is considerably more complicated than this simple picture. fMRI reveals specific connectivity for regions between which the anatomical information in humans is sparse or nonexistent. For example, there are reciprocal connections between the medial and dorsolateral prefrontal cortex (both areas being involved in working memory - see Barbey et al 2014), the latter being a key component of the central executive network as defined by fMRI, and receiving input from both visual streams as well as auditory and somatosensory cortex. The dlPFC in turn sends fibers back to the entorhinal cortex and the subiculum of the hippocampus. There is also functional connectivity between the entorhinal cortex and ventrolateral portions of the prefrontal cortex (Croxson et al 2005). Significant progress is being made in this area using the human connectome (Rolls et al 2022). A summary of entorhinal and hippocampal wiring up to the prefrontal cortex is shown in the figure.

(figure from Bush et al 2014)

In the entorhinal cortex and the hippocampus are the ingredients necessary for scene reconstruction. In this activity, the objects in the scene are localized relative to the organism, and mapped in an egocentric coordinate system in such a way that the organism can navigate. In such a map, the details of a particular object are only relevant insofar as they assist real-time behavior - they may not enter short term memory at all. When humans recall a scene we frequently forget the irrelevant information. Sometimes we can recall it when prompted, other times it's like it never got into storage.

There is also abundant evidence that a translation between egocentric and allocentric spatial reference frames is performed in and around the hippocampal circuitry (Szczepanski and Saalmann 2013). The allocentric reference frame is needed to navigate, and the egocentric reference frame is needed for the associated limb and body movements. The parietal spatial areas and the frontal eye fields are organized in viewer-centric coordinates, while the supplementary eye fields and a portion of the superior parietal lobule may be organized in allocentric coordinates. In the entorhinal cortex and hippocampus, the encoding is clearly allocentric, because of the grid cells and place fields.

The mapping from egocentric to allocentric reference frames has a lot to do with boundaries. In addition to the grid mapping the navigation space, the boundaries constrain the relative positions of objects, and generate a coordinate system that is reciprocal to the egocentric view. In the allocentric view, the position of the organism is mapped relative to the boundaries. This creates a "navigation space" within which the organism can operate. Part of the function of the attention system is to "explore the space", that is to say, define its construction and contents. Exploratory and reward-seeking behavior occurs by default when the organism is placed in a novel environment.
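Geometrically, the translation between egocentric and allocentric frames is a rotation plus a translation. The sketch below shows only that geometry, assuming a flat 2D world and a known position and heading for the organism. It says nothing about how neurons implement the transform, and the function names and the (forward, left) convention are hypothetical.

```python
import math

def ego_to_allo(obj_ego, position, heading):
    """Viewer-centred (forward, left) offsets -> world (x, y).

    position: organism's (x, y) in the world frame
    heading:  facing direction in radians, counter-clockwise from the +x axis
    """
    fwd, left = obj_ego
    x = position[0] + fwd * math.cos(heading) - left * math.sin(heading)
    y = position[1] + fwd * math.sin(heading) + left * math.cos(heading)
    return (x, y)

def allo_to_ego(obj_allo, position, heading):
    """World (x, y) -> viewer-centred (forward, left) offsets (the inverse map)."""
    dx = obj_allo[0] - position[0]
    dy = obj_allo[1] - position[1]
    fwd = dx * math.cos(heading) + dy * math.sin(heading)
    left = -dx * math.sin(heading) + dy * math.cos(heading)
    return (fwd, left)

# Organism at (2, 3) facing "north" (+y); an object one unit straight ahead.
world = ego_to_allo((1.0, 0.0), (2.0, 3.0), math.pi / 2)
print(world)                                          # close to (2, 4)
print(allo_to_ego(world, (2.0, 3.0), math.pi / 2))    # back to roughly (1, 0)
```

Note that both directions need the organism's own position and heading. That is one way to read the role of boundaries: they supply the fixed frame against which position and heading are measured.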

In addition to the two pathways directly related to visual objects in real time, there is a third stream that Rolls calls the "reward stream". It enters from the orbitofrontal cortex and anterior cingulate cortex, and holds information related to the "value" of an object, or an object relative to other objects ("scene configuration"). There are direct monosynaptic connections from the hippocampus to the nucleus accumbens, which is the primary target of dopamine fibers from the ventral tegmental area and is reciprocally connected with it.

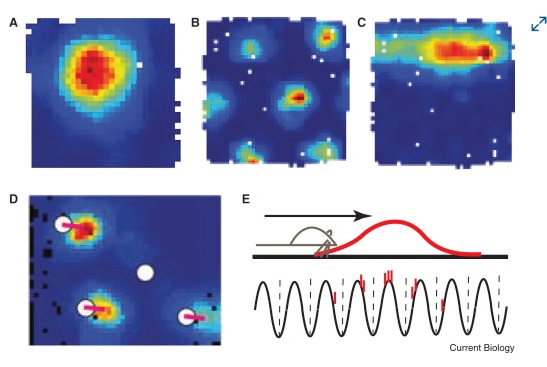

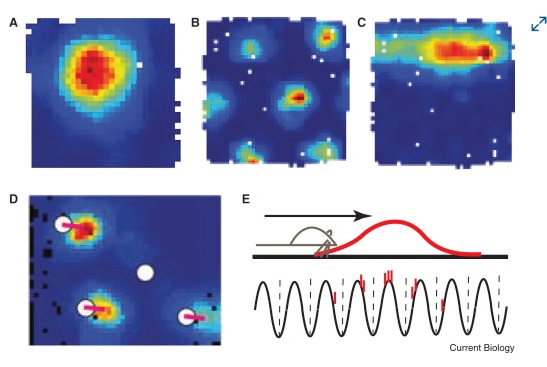

Visualizations of the scene mapping behavior in the hippocampus can be quite revealing. Here for example are the receptive fields of place cells in the hippocampus (a) and grid cells in the entorhinal cortex (b). The mechanism of phase encoding is shown in (e). The red portion is the receptive field of a place cell, and the bottom part shows its output after phase encoding against the theta rhythm. The result of phase encoding is that the position of the organism in allocentric space comes to be represented by the position of the spikes in a spike train. Thus as the organism navigates, a snapshot of the spike trains represents (and reveals) where the organism is at any given time.

(figure from Knierim 2015)
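The phase encoding of position described above can be sketched in a few lines. This is a deliberately simplified illustration: it assumes a single place field on a linear track and a perfectly linear relation between position in the field and theta phase, with spikes at late phases on entry and early phases on exit (the direction usually reported for phase precession). The field limits and function names are hypothetical.

```python
import math

FIELD_START, FIELD_END = 40.0, 60.0   # hypothetical place field on a track (cm)

def spike_phase(position):
    """Theta phase (radians) at which the cell fires, or None outside the field."""
    if not FIELD_START <= position <= FIELD_END:
        return None
    fraction = (position - FIELD_START) / (FIELD_END - FIELD_START)
    return 2 * math.pi * (1.0 - fraction)   # late phase at entry, early at exit

def decode_position(phase):
    """Invert the mapping: recover position within the field from spike phase."""
    fraction = 1.0 - phase / (2 * math.pi)
    return FIELD_START + fraction * (FIELD_END - FIELD_START)

for pos in (30.0, 42.0, 50.0, 58.0):
    phase = spike_phase(pos)
    if phase is None:
        print(pos, "outside field, no spikes")
    else:
        print(pos, round(math.degrees(phase)), round(decode_position(phase), 2))
```

A downstream reader that knows the theta phase can therefore recover where the animal is within the field from spike timing alone, which is the point of the "snapshot" remark above.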

These receptive fields change according to the scene. A place cell does not represent the same place all the time; it represents different places in different scenes. Place cell receptive fields can be "reset" by things as simple as an odor in the environment. Generally one can predict that they can be reset by any sufficient form of orienting (any time there's a new scene, or a new view of a scene - and in fact there is some evidence that place maps "rotate" along with the visual view - see Cone and Shouval 2021, Dong et al 2021, Kinsky et al 2018, Robinson et al 2020).

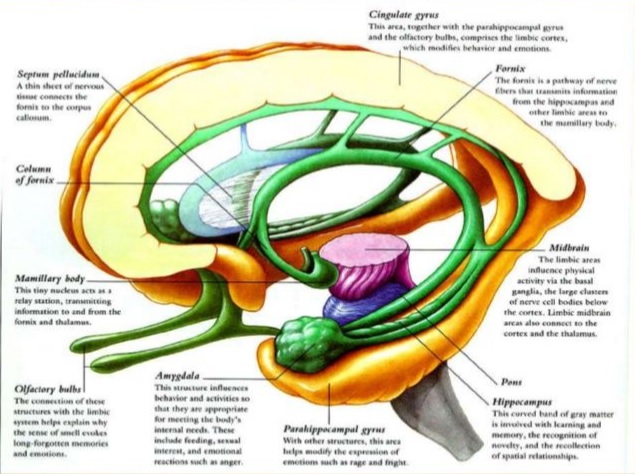

Memory and the Prefrontal Cortex

A memory is not just in "one" place in the brain. Bits and pieces of it are all over. The details of a memory seem to be stored in the areas that are most relevant to it: for instance, visual information is stored in the visual cortex, and fear and threats end up in the amygdala. The Circuit of Papez was originally described as an emotional system, and later it became linked to short term memory, but now it seems that its primary role is neither of these. Its primary role seems to be memory mapping related to scenes. This circuitry includes the mammillary bodies, which respond to head direction, and the anterior cingulate cortex, which handles attention (among other things). The hippocampus projects massively to the prefrontal cortex, as does the mediodorsal nucleus of the thalamus, which is richly connected to almost the entire prefrontal area including the orbital portion.

Three major subsystems have been identified emanating from the hippocampal region (Aggleton 2011). One is a subsystem closely related to episodic memory, that originates in the subiculum of the hippocampus, and involves the anterior thalamic nuclei, mammillary bodies, and retrosplenial cortex. Another is a subsystem related to affect and social learning, originating from hippocampal CA1 and the subiculum, and involving the amygdala and nucleus accumbens. A third is a reciprocal hippocampal-parahippocampal subsystem originating from the length of CA1 and the subiculum, characterized by columnar connections with reciprocal topographies. In addition to these three, there is a massive parahippocampal-prefrontal system involved with context retrieval and familiarity. It has widespread hippocampal and thalamic connectivity.

It is impossible to divorce scene reconstruction from short term memory. This is (at least in part) because scene reconstruction requires context. In processing scene information, there is a bidirectional relationship with memory. The incoming information needs to be stored in memory, and contextual information related to the scene needs to be retrieved from memory. Both of these functions must necessarily involve the hippocampus, where scenes are represented allocentrically. "Objects" in a visual scene are time-independent in the sense that they're conceptual abstractions. The information related to objects in scenes is mainly their placement and their movements. Movement based on self-movement of the organism (head, neck, and eyes) must be separated from the movement intrinsic to the scene itself. Every view of the scene may be different, requiring the recall of new contextual information. These processes are coordinated by the interplay between the hippocampus and the prefrontal cortex.

A powerful form of information encoding happens at the level of the hippocampus. It's called "phase encoding" and it is intimately related to local and global rhythms. In the hippocampus in particular, it is strongly related to a range of delta and theta rhythms, which may occur in the 2 Hz to 9 Hz range and seem to increase in frequency as one moves dorsally. Phase encoding is a way of locally compressing information. It encodes temporal relationships between events, in a form that can be staged and conveniently replayed along the neural timeline. It enables a form of local temporal invariance that helps separate the objects themselves from the sequence of their relationships. Phase encoding is an efficient form of encoding information near the point at infinity on the timeline (Seenivasan and Narayanan 2020).

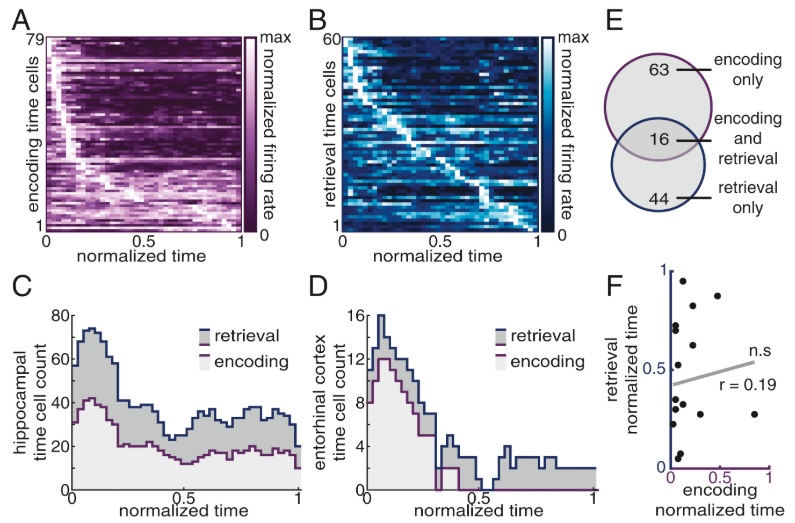

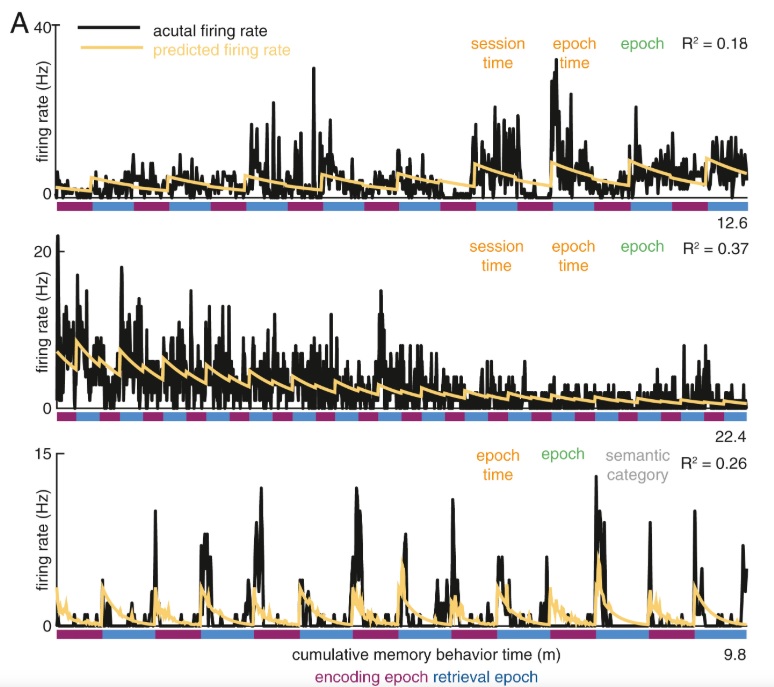

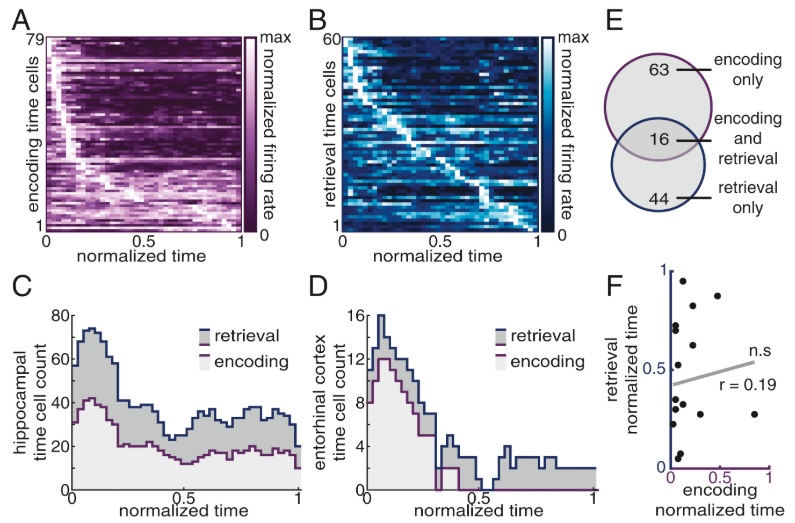

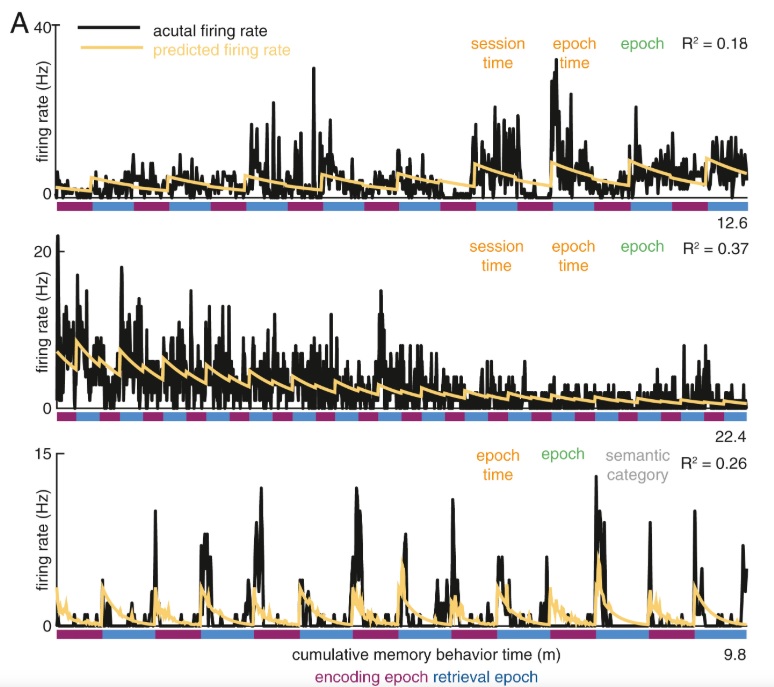

In principle, phase encoding is straightforward. It is a particular form of the "phase modulation" used in communications systems. This form of modulation is often deemed to be superior to amplitude and frequency modulation, in that it is computationally efficient and noise resistant. However radio engineers are keenly aware of the issues in encoding and decoding. In the radio context, decoding phase-encoded information requires complex circuitry (usually handled with phase locked loops). In a neural context, things are a little more forgiving. Phase encoding can in theory occur on a local basis, and again "in theory" the precise form of the encoding waveform need not be used to recover the original signal. The key part of the information that is actually being encoded in the brain, is relative positioning. And this can occur along either spatial or temporal dimensions, or both. In a place cell in the hippocampus, the phase of firing represents the position within the receptive field. In a time cell, the phase represents the occurrence of an event relative to some other event. The figures below show the behavior of time cells in the hippocampus and entorhinal cortex, during encoding and retrieval. One can see these cells signaling the beginnings of episodic epochs and ramping between them.

(figure from Umbach et al 2020)

(figure from Umbach et al 2020)
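The claim that relative positioning is what matters can be illustrated with a small sketch. It assumes an idealized rhythm at a fixed 8 Hz and two events less than one cycle apart. The frequency, event times and function names are illustrative assumptions. The point is that the interval between events is recoverable from their phase difference, whatever the absolute phase offset of the rhythm happens to be.

```python
import math

THETA_HZ = 8.0   # assumed rhythm frequency; one cycle lasts 125 ms

def phase_of(event_time, offset=0.0):
    """Phase of the rhythm (radians) at the moment an event occurs."""
    return (2 * math.pi * THETA_HZ * event_time + offset) % (2 * math.pi)

def relative_interval(phase_a, phase_b):
    """Time from event a to event b, recoverable modulo one cycle."""
    return ((phase_b - phase_a) % (2 * math.pi)) / (2 * math.pi * THETA_HZ)

t_a, t_b = 1.010, 1.050   # two events 40 ms apart (hypothetical)

recovered = []
for offset in (0.0, 1.3, 4.0):   # different absolute phases of the rhythm
    dt = relative_interval(phase_of(t_a, offset), phase_of(t_b, offset))
    recovered.append(dt)
    print(offset, round(dt * 1000, 3), "ms")
```

The decoder never needs the waveform or its absolute phase, only the difference between two phases. The price is ambiguity beyond one cycle, which is one reason slower rhythms can carry longer relative intervals.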

In the context of a timeline interpretation, this kind of encoding is important because it begins to map points in time into a window. "Events" (points in time) begin to include related activity in small intervals around the event. The firing pattern of each neuron ends up encoding a tiny patch of a larger manifold that represents a spatiotemporal sequence of experience. The sequence of experience is represented by spikes in a spike train. Engineers will quickly recognize this as a form of "information compression", since it is easy and straightforward to include only those events into the spike train that have been pre-filtered to be "significant".

Since information in the hippocampus is phase-encoded, it's a good bet that it's also phase-encoded in the prefrontal cortex. Incoming contextual information from the cortex should be presented in an accessible form to the hippocampus. This is definitely an area where machine learning can inform neuroscience. In particular, the exact nature of phase encoding in the prefrontal cortex remains a mystery. One of the big clues is that a phase map encodes the relationships between objects in both space and time - and in the case of plastic synapses, these relationships exist in the form of statistical correlations between spike trains. "Context" is, in fact, these relationships.

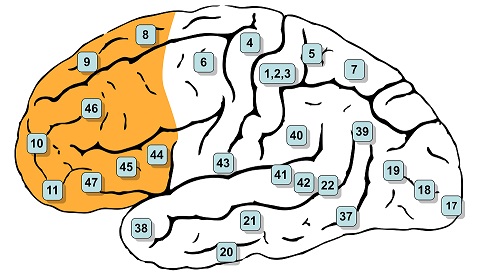

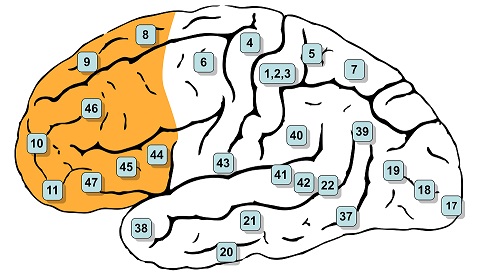

In humans the prefrontal cortex covers Brodmann's areas 8-14, 24-25, 32, and 44-47 inclusive, as shown. The classic historical definition of the PFC is the projection area of the mediodorsal nucleus of the thalamus (Rose and Woolsey 1948).

(image from Henry Vandyke Carter, public domain from Gray's Anatomy)

Fibers from the "where" visual pathway enter the parahippocampal cortex and connect with the medial entorhinal cortex. Fibers from the "what" visual pathway enter the perirhinal cortex and connect with the lateral entorhinal cortex. Fibers from both lateral and medial entorhinal pathways enter the hippocampus, where they remain segregated and connect in distinct manners. Parts of the hippocampus then connect with the prefrontal cortex through several pathways, some direct and some indirect, via the subiculum and other nearby structures.

(figure from Preston & Eichenbaum 2013)

The connections to and from the hippocampus maintain a topographic map along the anterior to posterior axis. Anterior areas are involved with emotion; they are close to both object-identification input and the amygdala. Posterior areas are more related to cognition and navigation; they include inputs from the place fields. Feeding the entire hippocampus is the medial septal nucleus, which generates a theta rhythm and also maintains topographic specificity. The theta rhythm moves along the hippocampal axis in the form of a traveling wave.

Fibers targeting the medial prefrontal cortex originate from intermediate and ventral CA1 and the proximal limb of the subiculum. There is a gradient of connectivity in the PFC, with ventral portions receiving dense projections in layers 2-6, and dorsal portions receiving sparser connections in layers 5 and 6. The connectivity is multi-faceted, in that different types of cells from CA1 project to different targets in the PFC. Some hippocampal CA1 neurons project to the contralateral hippocampus and send collaterals to the ipsilateral PFC, while others project to the ipsilateral nucleus accumbens and send collaterals to the ipsilateral PFC. Stimulation of hippocampal CA1 results in excitatory field potentials in mPFC. They consist of a large positive wave, followed by a longer lasting negative wave at a 15-22 ms interval (Ruggiero et al 2021). The pathways from hippocampus to PFC are plastic. Paired pulses can produce both facilitation and inhibition, and tetanic input can result in both LTD and LTP, in some cases lasting up to 20 days (Taylor et al 2016).

Fibers from the subiculum of the hippocampus project to wide areas in the frontal brain, including the medial frontal cortex, the caudal cingulate gyrus, the amygdala, the septum, the hypothalamus, and the parahippocampal cortex (Rosene and Van Hoesen 1977). The connectivity is "extensive". The connectivity between the hippocampus and the amygdala has been studied extensively (Saunders et al 1988). Fibers from the basal, medial basal, and cortical nuclei of the amygdala project into the molecular layer of the hippocampal subfields, and in turn CA1 neurons send axons back into the medial basal nucleus and the ventral part of the cortical nuclei of the amygdala.

In the other direction, from prefrontal cortex to hippocampus, there are multiple pathways that are thought to stabilize and synchronize some of the oscillatory and phase-related relationships. In rodents, such a stabilizing influence is provided by the nucleus reuniens of the thalamus (Roy et al 2018). In humans and primates, synchronization between the hippocampus and the prefrontal cortex is detectable in the delta and theta range, from 3 Hz to 8 Hz. Disruption of this synchronization causes wide ranging impairments in performance, some of which can be specifically related to memory recall.

The hippocampus can generate its own theta rhythm, independently of the synchronizing influence from the medial septal nucleus (MSN). The role of the MSN is to shape and pattern the rhythm as it travels through the hippocampus. The coherence of intra-hippocampal theta has been studied, and is in general agreement with this principle. Thus in addition to any local phase encoding, there is also a global encoding at the level of the entire hippocampal population. During active and exploratory behavior, theta is present in the MSN, hippocampus, and prefrontal cortex, and there is coherence, meaning that at least a portion of the local rhythms are phase-locked. If all the rhythms were at random phases we wouldn't detect any theta at all. When an electrode array is inserted into the hippocampus, different electrodes show different amplitudes and different phases. Local hippocampal theta is related to voltage-dependent calcium currents in the dendrites of pyramidal cells. Input from the entorhinal cortex can drive these cells into an oscillatory mode (Buzsaki 2002).
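The remark about random phases is easy to verify numerically. The sketch below treats each local rhythm as a unit oscillator at the same frequency and asks how large the summed signal is when the phases are random versus loosely locked. The population size, the 0.5 radian spread and the function name are arbitrary choices made for illustration.

```python
import cmath
import math
import random

def population_amplitude(phases):
    """Length of the mean phase vector: 1 means perfectly locked, and values
    near 0 mean the individual rhythms cancel in the summed signal."""
    return abs(sum(cmath.exp(1j * p) for p in phases) / len(phases))

random.seed(1)
n = 2000

# Every oscillator at an unrelated phase.
random_phases = [random.uniform(0, 2 * math.pi) for _ in range(n)]

# Oscillators scattered around a common phase (loosely phase-locked).
locked_phases = [random.gauss(math.pi / 2, 0.5) for _ in range(n)]

amp_random = population_amplitude(random_phases)
amp_locked = population_amplitude(locked_phases)
print("random phases:", round(amp_random, 3))
print("locked phases:", round(amp_locked, 3))
```

With random phases the amplitude shrinks roughly as one over the square root of the population size, so a detectable field-level theta implies that a substantial fraction of the local rhythms share a phase relationship.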

There is also synchrony in the frequency spectra of hippocampus and prefrontal cortex, in the gamma range between 30 and 80 Hz. Hippocampal theta modulates the amplitude of PFC gamma (Sirota 2008, Tamura et al 2017). (Biologists call this "phase-amplitude coupling"; to a radio engineer it would be simple amplitude modulation.) Theta synchrony is dynamically modulated during spatial working-memory tasks, and phase locking between hippocampus and PFC is increased by correct choices. Gamma coherence increases with scene learning ("spatial reference memory"). Theta-gamma coupling is linked with increased performance in spatial working memory tasks. Synchrony in these pathways also occurs during sleep, which we won't get into.
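Here is a small synthetic illustration of theta modulating gamma amplitude, together with one simple way to quantify it. The signal is constructed, not recorded, so the theta phase and gamma envelope are known exactly. With real data they would have to be estimated first (typically by band-pass filtering and a Hilbert transform), a step omitted here. The frequencies, the sampling rate and the normalized mean-vector-length measure are illustrative choices, not the method of the cited studies.

```python
import cmath
import math

FS = 1000                        # sampling rate in Hz (assumed)
THETA_HZ, GAMMA_HZ = 6.0, 40.0   # illustrative theta and gamma frequencies

def synthesize(depth, seconds=2.0):
    """Return (theta phase, gamma envelope, gamma signal) as sample lists."""
    phases, envelope, signal = [], [], []
    for i in range(int(FS * seconds)):
        t = i / FS
        phi = (2 * math.pi * THETA_HZ * t) % (2 * math.pi)
        env = 1.0 + depth * math.cos(phi)   # theta phase sets gamma amplitude
        phases.append(phi)
        envelope.append(env)
        signal.append(env * math.sin(2 * math.pi * GAMMA_HZ * t))  # AM carrier
    return phases, envelope, signal

def coupling_strength(phases, envelope):
    """Envelope-weighted mean phase vector, normalized by the mean envelope."""
    mean_env = sum(envelope) / len(envelope)
    z = sum(a * cmath.exp(1j * p) for a, p in zip(envelope, phases)) / len(phases)
    return abs(z) / mean_env

for depth in (0.0, 0.4, 0.8):
    phases, envelope, _ = synthesize(depth)
    print(depth, round(coupling_strength(phases, envelope), 3))
```

For this construction the index comes out at half the modulation depth, and it is zero when the gamma amplitude ignores theta phase. That is the amplitude-modulation picture in numerical form.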

This is quite a lot of information, isn't it? There's more! We could talk about the neurotransmitter profile, and how neuromodulators affect these pathways. The hippocampus and prefrontal cortex are loaded with receptors for serotonin, norepinephrine, dopamine, acetylcholine, endocannabinoids and endogenous opioids and a host of other neurotransmitters. Each of these has a specific effect on the circuitry. It's complicated stuff, and one needs a modeling tool to keep track of it all. At this point though, the tools that are available to us (as ordinary mortals) start getting challenged by the complexity. Sometimes we find we have to move out of our PCs and into the cloud, where far more memory and computational power are available. There are some basic requirements for these tools. They have to be able to support various types of neuron behavior, including compartmentation. They have to be able to support various types of synaptic action, including neuromodulators. And perhaps most importantly, they have to support geometry and topography. Everything in the cortex is modular, and that includes the hippocampus. Everything is topographic. Ultimately these relationships "determine" things like the theta-gamma coupling we see externally. If we disconnect the input from the medial septal nucleus, what kind of coupling will we see between independent hippocampal theta oscillators? Over what distance can coupling be detected? We'll look at some of these issues when we get to the Kuramoto model.
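To give a flavor of the kind of question such a tool can answer, here is a minimal mean-field Kuramoto sketch. It is a toy under stated assumptions, not a model of the hippocampus: 50 oscillators with natural frequencies scattered around 6 Hz, all-to-all coupling of equal strength (so geometry and topography, which matter in the real tissue, are ignored), and simple Euler integration. Every parameter value and function name is hypothetical.

```python
import cmath
import math
import random

def order_parameter(phases):
    """Kuramoto order parameter r: 0 = incoherent, 1 = fully synchronized."""
    return abs(sum(cmath.exp(1j * p) for p in phases) / len(phases))

def simulate(coupling, n=50, steps=2000, dt=0.005, seed=0):
    """Integrate d(theta_i)/dt = w_i + K * r * sin(psi - theta_i); return final r."""
    rng = random.Random(seed)
    # Natural frequencies in rad/s, scattered around 6 Hz (assumed spread 0.5 Hz).
    freqs = [2 * math.pi * rng.gauss(6.0, 0.5) for _ in range(n)]
    phases = [rng.uniform(0, 2 * math.pi) for _ in range(n)]
    for _ in range(steps):
        z = sum(cmath.exp(1j * p) for p in phases) / n
        r, psi = abs(z), cmath.phase(z)     # mean-field amplitude and phase
        phases = [p + dt * (w + coupling * r * math.sin(psi - p))
                  for p, w in zip(phases, freqs)]
    return order_parameter(phases)

r_uncoupled = simulate(0.0)    # independent oscillators
r_coupled = simulate(20.0)     # strong mutual coupling
print("no coupling:    ", round(r_uncoupled, 3))
print("strong coupling:", round(r_coupled, 3))
```

Without coupling the oscillators drift apart and the population rhythm largely cancels. With sufficiently strong coupling they lock onto a common phase. Making the coupling depend on distance would be one way to start asking over what range locking can be detected.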

Meanwhile, let's move on. Now that we have an overview of how humans do it, let's take a look at how machines do it. Machine vision is an important area of mutual information for AI and neuroscience. We'll get into it in detail in the section on modeling, but first let's do a quick overview.
